Depth of field

Problem Description

Estimating depth from 2D images is often used in scene reconstruction, 3D object recognition, segmentation, and detection. Given a single RGB image as input, we predict a depth map for the entire scene.

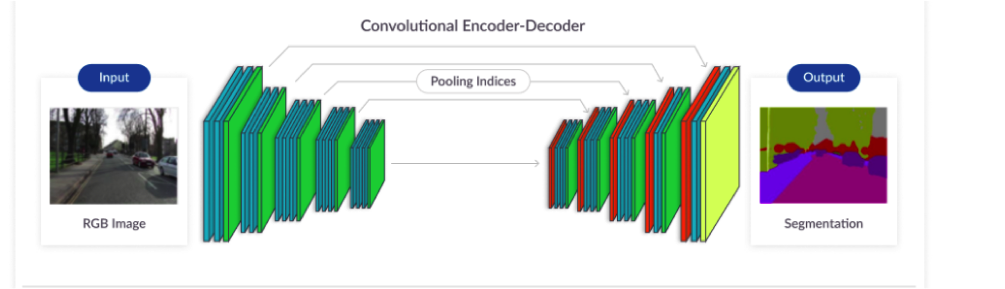

Model

The model follows an encoder-decoder design:

- Encoder: takes an input image and generates a high-dimensional feature vector.
- Decoder: takes the high-dimensional feature vector and generates a per-pixel output, which here is the depth map.

There are three major building blocks:

- Convolution
- Down-sampling
- Up-sampling
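The encoder-decoder idea and its three building blocks can be sketched in NumPy as below. This is not the model used here. The layer sizes, the 3x3 convolution, 2x2 max pooling for down-sampling, nearest-neighbor up-sampling, and the function names (`conv3x3`, `downsample`, `upsample`, `tiny_depth_net`) are all assumptions. The weights are random rather than trained, so the sketch only shows how an H x W x 3 image becomes an H x W depth map.

```python
import numpy as np

# Hypothetical toy model: layer sizes and weights are assumptions, not the real network.

def conv3x3(x, weights):
    """3x3 convolution (deep-learning style cross-correlation) with zero 'same' padding.

    x: (H, W, C_in) feature map; weights: (3, 3, C_in, C_out).
    """
    h, w, _ = x.shape
    padded = np.pad(x, ((1, 1), (1, 1), (0, 0)))
    out = np.zeros((h, w, weights.shape[3]))
    for dy in range(3):
        for dx in range(3):
            # Each kernel tap adds a shifted window times a (C_in, C_out) matrix.
            out += padded[dy:dy + h, dx:dx + w, :] @ weights[dy, dx]
    return out


def relu(x):
    return np.maximum(x, 0.0)


def downsample(x):
    """Down-sampling by 2x2 max pooling (assumes even height and width)."""
    h, w, c = x.shape
    return x.reshape(h // 2, 2, w // 2, 2, c).max(axis=(1, 3))


def upsample(x):
    """Up-sampling by nearest-neighbor repetition (doubles height and width)."""
    return x.repeat(2, axis=0).repeat(2, axis=1)


def tiny_depth_net(image, rng):
    """Encoder-decoder with random, untrained weights: illustrates shapes only."""
    w1 = rng.normal(size=(3, 3, 3, 16)) * 0.1
    w2 = rng.normal(size=(3, 3, 16, 32)) * 0.1
    w3 = rng.normal(size=(3, 3, 32, 16)) * 0.1
    w4 = rng.normal(size=(3, 3, 16, 1)) * 0.1
    # Encoder: smaller spatial size, more channels.
    f = downsample(relu(conv3x3(image, w1)))   # (H/2, W/2, 16)
    f = downsample(relu(conv3x3(f, w2)))       # (H/4, W/4, 32)
    # Decoder: back to full resolution with a single depth channel.
    f = relu(conv3x3(upsample(f), w3))         # (H/2, W/2, 16)
    depth = conv3x3(upsample(f), w4)           # (H, W, 1)
    return depth[..., 0]


rng = np.random.default_rng(0)
image = rng.random((64, 48, 3))          # stand-in for an RGB image scaled to [0, 1]
depth = tiny_depth_net(image, rng)
print(image.shape, "->", depth.shape)    # (64, 48, 3) -> (64, 48)
```

Down-sampling lets later convolutions see a wider context cheaply, and up-sampling restores the resolution needed for a per-pixel depth value.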

Examples

In this example, we show how to blur part of the background so that the foreground stands out. We start with the original image:

We calculate the depth map and convert it to grayscale:
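A minimal sketch of the grayscale conversion, assuming the model returns a float depth map and that simple min-max normalization to the range 0 to 255 is acceptable. `depth_to_grayscale` is a hypothetical helper name. Depending on the model, larger raw values may mean nearer (inverse depth) or farther, so it is worth checking that convention before thresholding.

```python
import numpy as np

def depth_to_grayscale(depth):
    """Min-max normalize a float depth map to an 8-bit grayscale image (hypothetical helper)."""
    d_min, d_max = float(depth.min()), float(depth.max())
    if d_max == d_min:                         # flat map: avoid division by zero
        return np.zeros(depth.shape, dtype=np.uint8)
    scaled = (depth - d_min) / (d_max - d_min)  # now in [0, 1]
    return np.round(scaled * 255).astype(np.uint8)


depth = np.array([[0.5, 1.0], [2.0, 4.5]])     # toy depth values
gray = depth_to_grayscale(depth)
print(gray)
```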

Based on a given threshold, we extract the foreground mask and its inverse (the background mask):
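Thresholding the grayscale depth image gives a boolean foreground mask, and negating it gives the inverted (background) mask. This is a sketch assuming brighter pixels are nearer to the camera. The helper `split_by_threshold`, its `near_is_bright` flag, and the threshold value 128 are hypothetical, since the original does not state the threshold.

```python
import numpy as np

def split_by_threshold(gray, threshold, near_is_bright=True):
    """Return boolean (foreground, background) masks from a grayscale depth image.

    near_is_bright=True assumes brighter pixels are closer to the camera;
    set it to False if the depth map encodes distance instead.
    """
    foreground = (gray >= threshold) if near_is_bright else (gray < threshold)
    background = ~foreground                   # the inverted mask
    return foreground, background


gray = np.array([[10, 200], [130, 90]], dtype=np.uint8)
fg, bg = split_by_threshold(gray, threshold=128)   # 128 is an assumed value
print(fg.astype(int))                              # 1 marks foreground pixels
```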

The mask lets us make a foreground image with a transparent background:
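One way to build the transparent-background image is to append the mask as an alpha channel, producing an RGBA array: opaque where the mask is set, fully transparent elsewhere. The helper name `foreground_rgba` and the uint8 RGB input are assumptions.

```python
import numpy as np

def foreground_rgba(image, foreground):
    """Attach the mask as alpha: opaque foreground, transparent background (hypothetical helper).

    image: (H, W, 3) uint8 RGB; foreground: (H, W) boolean mask.
    """
    alpha = np.where(foreground, 255, 0).astype(np.uint8)
    return np.dstack([image, alpha])           # (H, W, 4) RGBA


image = np.full((2, 2, 3), 100, dtype=np.uint8)
mask = np.array([[False, True], [True, False]])
rgba = foreground_rgba(image, mask)
print(rgba.shape, rgba[..., 3].tolist())
```

Saving such an array in a format with an alpha channel (PNG, for example) preserves the transparency.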

When we overlay this image on a blurred version of the original image:
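The overlay step is alpha compositing: each output pixel is `alpha * foreground + (1 - alpha) * blurred`, so masked pixels keep the sharp original and the rest come from the blurred copy. In the sketch below a simple box (mean) blur stands in for whatever blur the original pipeline uses (a Gaussian blur is a common choice). `box_blur`, `composite`, the radius, and the toy mask are hypothetical.

```python
import numpy as np

def box_blur(image, radius=2):
    """Mean filter over a (2*radius+1)^2 window; edges padded by replication."""
    k = 2 * radius + 1
    h, w = image.shape[:2]
    padded = np.pad(image.astype(np.float64),
                    ((radius, radius), (radius, radius), (0, 0)), mode="edge")
    out = np.zeros((h, w, image.shape[2]))
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)


def composite(rgba_foreground, background):
    """Alpha-blend an RGBA uint8 foreground over an RGB background (uint8 or float)."""
    alpha = rgba_foreground[..., 3:4].astype(np.float64) / 255.0   # (H, W, 1)
    fg = rgba_foreground[..., :3].astype(np.float64)
    out = alpha * fg + (1.0 - alpha) * background.astype(np.float64)
    return np.round(out).astype(np.uint8)


rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(6, 6, 3)).astype(np.uint8)
mask = np.zeros((6, 6), dtype=bool)
mask[2:4, 2:4] = True                          # hypothetical foreground region
rgba = np.dstack([image, np.where(mask, 255, 0).astype(np.uint8)])
result = composite(rgba, box_blur(image, radius=1))
```

A larger `radius` gives a stronger background blur. Because the whole image is blurred, foreground colors can bleed slightly into background pixels near the mask edge.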

We get the end result:

Result